Hi, everyone! I’m Cailin Plunkett, a rising junior majoring in physics and mathematics. This summer, I’m researching gravitational waves through the Caltech LIGO SURF program.

Electromagnetic (EM) radiation, such as infrared, visible light, and UV, is the type of data we are used to receiving from space. The first telescopes looked at stars and planets in the visible range, and we have since developed ground- and space-based telescopes that can image the universe at frequencies ranging from radio waves to gamma rays. Gravitational radiation, on the other hand, is a fundamentally different type of information that lets us probe the universe and the physics that governs it in novel ways.

In an electricity & magnetism class (e.g., PHYS 117), you’ll learn that EM radiation comes from accelerating charged particles. Analogously, gravitational waves (GWs) are produced by accelerating masses. These waves stretch and compress the very fabric of spacetime, but the ripples are only appreciable in extreme scenarios, like around black holes or neutron stars (the collapsed cores of large stars, with a few times the mass of the sun packed into the size of a city).

Einstein predicted GWs over a century ago, but only six years ago were any detectors sensitive enough to directly detect them: on September 14, 2015, the Advanced Laser Interferometer Gravitational-wave Observatory (aLIGO) network recorded two black holes, 36 and 29 times the mass of the sun, over a billion light-years away, colliding and merging into one. These ripples in spacetime stretched and squeezed the detector arms, changing their lengths by about one part in 10^21. For LIGO’s 4-kilometer arms, that is a change of roughly a thousandth the width of a proton. The LIGO detectors are the most sensitive measurement devices ever built.
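
To make that number concrete, here is a quick back-of-the-envelope check in Python. The 4 km arm length is LIGO's actual arm length; the proton diameter of about 1.7 × 10^-15 m is a rounded value assumed here for illustration.

```python
# Back-of-the-envelope: how much does a LIGO arm change length?
ARM_LENGTH_M = 4.0e3          # each LIGO arm is 4 km long
STRAIN = 1.0e-21              # fractional length change quoted in the text
PROTON_DIAMETER_M = 1.7e-15   # assumed rounded value, for illustration only

# Strain is a fractional change, so the absolute change is strain * length.
delta_length_m = STRAIN * ARM_LENGTH_M
fraction_of_proton = delta_length_m / PROTON_DIAMETER_M

print(f"Arm length change: {delta_length_m:.1e} m")
print(f"Fraction of a proton's width: {fraction_of_proton:.1e}")
```

The arm length change comes out to about 4 × 10^-18 m, which is on the order of a thousandth of a proton's width.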

The Caltech LIGO SURF projects span astrophysics, data analysis, and experimental physics, all contributing to some aspect of the LIGO initiative. I’m working in data analysis with a driving motivation of understanding how detector noise impacts our analysis of astrophysical signals. The LIGO data comprises a whole lot of noise (seismic, thermal, and quantum, just to name a few sources) and occasional transient GW signals. To analyze these signals, we need an effective way to separate them from the noise. While modeling GW signals for different systems has been the subject of many research efforts over the last few decades, only recently has the noise been given as much attention.

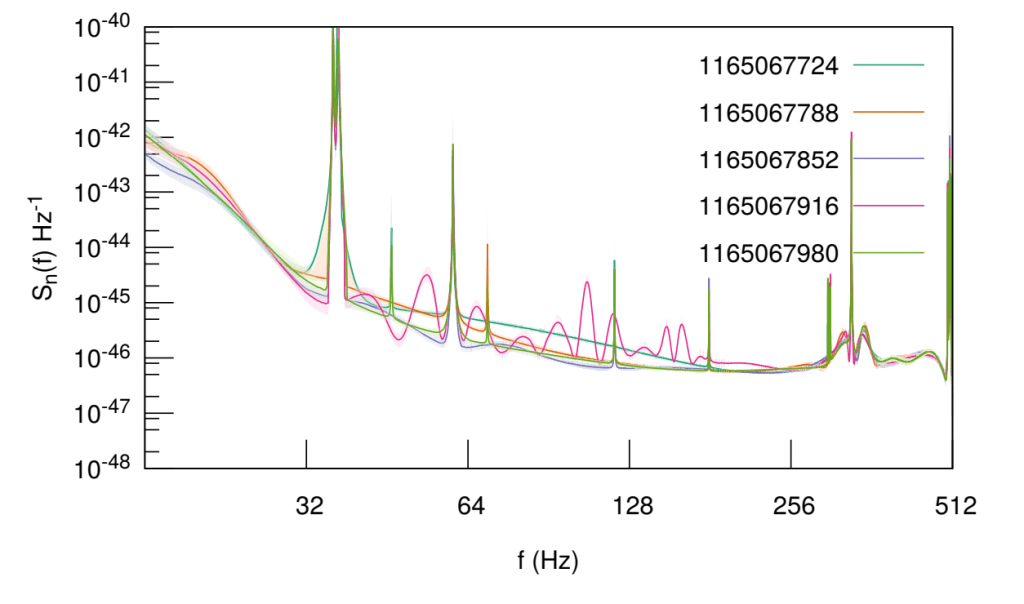

The traditional parameter estimation pipeline has been to estimate the noise using one section of data that does not include a signal, then give that estimate to an algorithm that computes the black hole parameters for a piece of data that does have a signal. However, this sequential method does not incorporate uncertainty and makes several assumptions, such as that the noise does not change over time.

LIGO-Hanford noise as a function of frequency for several time chunks, about a minute apart. Note how the noise changes even over short time periods. Image credit: LIGO, “A guide to LIGO-Virgo detector noise and extraction of transient gravitational-wave signals,” Fig. 7.
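
The noise-estimation step of the sequential approach can be illustrated with a toy sketch. Everything here is an assumption for illustration: the data are simulated white Gaussian noise rather than real detector data, the sampling rate and segment length are made up, and Welch's method (via `scipy.signal.welch`) is a common stand-in, not necessarily the estimator the actual pipeline uses. The point is only to show how a noise estimate from a signal-free segment can be wrong for the segment being analyzed if the noise level drifts.

```python
import numpy as np
from scipy.signal import welch

SAMPLE_RATE_HZ = 1024   # hypothetical sampling rate
SEGMENT_SECONDS = 16    # hypothetical segment length

rng = np.random.default_rng(seed=0)
n_samples = SAMPLE_RATE_HZ * SEGMENT_SECONDS

# Toy stand-ins for detector data: white Gaussian noise whose
# level has drifted by 20% between the two segments.
off_source = rng.normal(scale=1.0, size=n_samples)  # segment with no signal
on_source = rng.normal(scale=1.2, size=n_samples)   # segment we want to analyze


def estimate_psd(data):
    """Welch estimate: average periodograms of overlapping 1-second chunks."""
    return welch(data, fs=SAMPLE_RATE_HZ, nperseg=SAMPLE_RATE_HZ)


freqs, psd_off = estimate_psd(off_source)
_, psd_on = estimate_psd(on_source)

# A sequential analysis would use psd_off as "the" noise model for the
# on-source data. Compare it with the noise level actually present there.
ratio = np.mean(psd_on) / np.mean(psd_off)
print(f"On-source noise power is about {ratio:.2f}x the off-source estimate")
```

In this toy case the off-source estimate understates the on-source noise power by a factor close to 1.2 squared, which is the kind of mismatch a fixed noise estimate cannot account for.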

A new capability of one of the main algorithms allows it to simultaneously model the noise and signal properties using a single segment of data, addressing both of those limitations. I’m quantifying the differences in recovered signal properties when we use the sequential estimation method versus the simultaneous one. The broader payoff is that if LIGO can get more accurate measurements of black hole and neutron star parameters, it can better study their populations and properties, which inform fundamental physics (e.g., whether general relativity breaks down) and the formation of the universe.

In these first two weeks, I’ve been learning the underlying physics of LIGO and understanding the different algorithms at work. I’ve also been getting to know everyone and exploring Pasadena! Now that I’m more up to speed, I’ve started doing “real” computational runs on the data, which take about a day to finish, and am ready to dig into my project and begin full analyses. I’m excited to share my experiences at Caltech this summer with you and look forward to the weeks ahead!
